When it comes to science, the internet creates a major problem for non-experts: given the abundance of conflicting claims, and given that each side seems able to cite scientific studies in its support, what should I believe and why?

[The subjectivist fallacy is committed when one concludes that there is no fact of the matter simply because there are competing positions.]

A key concept in evaluating scientific evidence is that not all evidence is of equal quality. Here’s a list of things to consider when evaluating a scientific study (especially when there are competing positions):

Main things to look for when evaluating the quality of a scientific claim:

3 Main Problems with Clinical Trials: https://clinicaltrialist.wordpress.com/clinical/why-clinical-trials-fail/

Key Concepts:

Elements of a Good Clinical Trial:

1) Control Group: What success rate would we expect with chance? Unless you compare a treatment/intervention to chance/natural recovery rates there’s no way to measure a treatment’s efficacy. Rates of efficacy cannot be measured in absolute terms. They are always relative to non-intervention or the current standard of care. (Recall Ami’s Commandments of Critical Thinking)

2) Double blinding: You must prevent both researcher and subject bias. Subjects want the treatment to work and can over-report the perceived positive effects (see #4 below). Researchers also want the treatment to work and so can subtly bias subject reporting through their tone of voice and how they ask questions. Double-blinding is the best way to prevent both. The blinds should stay on until after the data has been evaluated. If possible, read the “methods” section to make sure.

3) A 3rd treatment Group: With some interventions we know that just about any intervention will be better than no intervention (e.g., suicidal behavior), so rather than having only no treatment vs treatment you need to measure new treatments vs the current standard of care/drug.

Another reason to beware of subjective measures (i.e., patient reported outcomes) has to do with measurement problems and the same reasons for which we blind (see #2 above).

5) Random sample: The sample of subjects should be randomly selected. Avoid self-selection (particularly common in weight-loss trials).

6) Placebo control: The control group should be given a placebo that appears indistinguishable from the actual drug/intervention/treatment. If there’s a chance that either group could figure out whether or not they’ve been given a placebo, this can effectively remove the blinds from the study and bias the reporting and results, generally in favor of the treatment.

7) Minimize researcher degrees of freedom: This is how you avoid confirmation bias and fishing expeditions:

Some Things to Look for when Evaluating a Study

1) Effect size: If the effect size is small, there is a good chance it is due to some kind of methodological bias in the study rather than a real effect.

2) Duration of effect: An intervention might have only a short-term effect but claim to have a long-term effect. This type of problem is typical in weight-loss trials. Often interventions/treatments that only show a short-term effect are placebo.

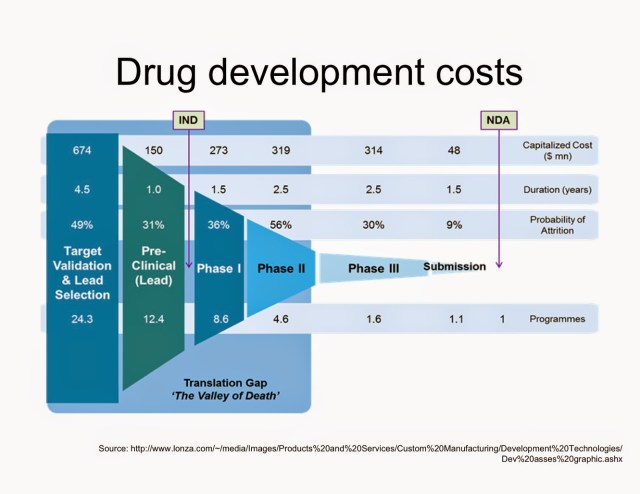

3) Type of study: Was it a pilot study? A proof of concept study? An in vitro study? Animal study? Phase 1 clinical trial? Phase 2 clinical trial? Retrospective study? Longitudinal study? FDA trial? The lower the level of the study, the greater the chance of positive effects, but this doesn’t usually translate into efficacy at the human level under controlled conditions. The more rigorous and well-controlled the study (e.g., an FDA trial), the smaller the effect is likely to be. Animal studies are unreliable.

4) Funding: Be aware of the relationship between funding source, the research institution, and who gains from positive findings. A vested interest doesn’t mean the results are invalid but only that we should be extra skeptical.

5) Reporting: How are the results being reported in the media vs what the actual abstract says?

6) Context in the literature: A single study carries very little weight and so single studies should be evaluated in the context of other similar studies. For example, if a study shows a positive result but 90% of other similar studies show no positive results, we should dismiss the one positive study (assuming it is of equal rigor). Avoid single-study syndrome. See also: Why you shouldn’t trust a single study.

7) Meta-analyses: Meta-analyses are studies that combine all other similar studies on a topic to evaluate the overall trend. The rule of thumb for evaluating a meta-analysis is “garbage in, garbage out”. In other words, if most of the studies included in the meta-analysis are of poor quality then this will be reflected in the conclusion of the meta-analysis. When evaluating a meta-analysis always read the section on inclusion criteria. This will tell you what quality benchmark a study had to meet to be included in the meta-analysis.

8) Replication: Has the study been replicated using either the same methods or (even better) have the results been replicated using a different method? If the answer is “no” then the results should not be viewed as definitive.

9) Impact Factor of the Journal: One major problem that has arisen with the internet is that anyone with a computer and a few web design skills can start an “academic journal.” There is a significant (and growing) number of online journals that *look* like legitimate peer-reviewed journals but aren’t. They are ideological platforms. If you find a journal article that seems suspicious you should (a) look up the name of the journal on Wikipedia to check its origins and (b) google the name of the journal plus “impact factor”. A journal’s impact factor measures how often its articles are cited, which makes it a rough proxy for the journal’s credibility.

10) Evidence-based vs Science-based: An evidence-based approach to medicine (or any scientific domain) counts all evidence as equal. A science-based approach takes into account prior plausibility and basic background science. For example, suppose someone claims that thinking really positive thoughts cures cancer and they show you a study where some people meditated on happy thoughts 3 hours a day in the direction of some cancer cells in a petri dish. Lo and behold, some of the cancer cells died! An evidence-based approach would say “hey, look, there’s some evidence that this works.” A science-based approach, however, would say “given our background knowledge of basic science there isn’t a plausible mechanism here, so this study doesn’t really count as evidence. The results are probably better explained by a flawed methodology.”
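The “garbage in” rule of thumb for meta-analyses (item 7 above) can be made concrete with a small sketch. It assumes a fixed-effect, inverse-variance pooling scheme, which is one common way to combine studies but not the only one, and every number in it is invented purely for illustration. The function name `pooled_fixed_effect` is likewise hypothetical.

```python
import math


def pooled_fixed_effect(effects, std_errors):
    """Inverse-variance weighted (fixed-effect) pooled estimate and its standard error."""
    weights = [1.0 / se ** 2 for se in std_errors]  # more precise studies count for more
    total = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total
    return pooled, math.sqrt(1.0 / total)


# Invented data: three rigorous studies that find roughly no effect ...
rigorous_effects = [0.02, -0.01, 0.03]
rigorous_ses = [0.10, 0.10, 0.10]

# ... and two poorly controlled studies reporting large effects.
# (Biased studies can still look precise, so they get substantial weight.)
poor_effects = [0.60, 0.55]
poor_ses = [0.08, 0.08]

pooled_rigorous, se_rigorous = pooled_fixed_effect(rigorous_effects, rigorous_ses)
pooled_all, se_all = pooled_fixed_effect(
    rigorous_effects + poor_effects, rigorous_ses + poor_ses
)

print(f"rigorous studies only: {pooled_rigorous:.3f} (SE {se_rigorous:.3f})")
print(f"all studies pooled:    {pooled_all:.3f} (SE {se_all:.3f})")
```

With these made-up inputs the pooled estimate is close to zero when only the rigorous studies are included and much larger when the poor-quality ones are let in, which is why the inclusion criteria matter so much.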

Further Criteria from Ioannidis

Corollary 1: The smaller the studies conducted in a scientific field, the less likely the research findings are to be true. Small sample size means smaller power and, for all functions above, the PPV for a true research finding decreases as power decreases towards 1 − β = 0.05. Thus, other factors being equal, research findings are more likely true in scientific fields that undertake large studies, such as randomized controlled trials in cardiology (several thousand subjects randomized) [14] than in scientific fields with small studies, such as most research of molecular predictors (sample sizes 100-fold smaller) [15].

Corollary 2: The smaller the effect sizes in a scientific field, the less likely the research findings are to be true. Power is also related to the effect size. Thus research findings are more likely true in scientific fields with large effects, such as the impact of smoking on cancer or cardiovascular disease (relative risks 3–20), than in scientific fields where postulated effects are small, such as genetic risk factors for multigenetic diseases (relative risks 1.1–1.5) [7]. Modern epidemiology is increasingly obliged to target smaller effect sizes [16]. Consequently, the proportion of true research findings is expected to decrease. In the same line of thinking, if the true effect sizes are very small in a scientific field, this field is likely to be plagued by almost ubiquitous false positive claims. For example, if the majority of true genetic or nutritional determinants of complex diseases confer relative risks less than 1.05, genetic or nutritional epidemiology would be largely utopian endeavors.

Corollary 3: The greater the number and the lesser the selection of tested relationships in a scientific field, the less likely the research findings are to be true. As shown above, the post-study probability that a finding is true (PPV) depends a lot on the pre-study odds (R). Thus, research findings are more likely true in confirmatory designs, such as large phase III randomized controlled trials, or meta-analyses thereof, than in hypothesis-generating experiments. Fields considered highly informative and creative given the wealth of the assembled and tested information, such as microarrays and other high-throughput discovery-oriented research [4,8,17], should have extremely low PPV.

Corollary 4: The greater the flexibility in designs, definitions, outcomes, and analytical modes in a scientific field, the less likely the research findings are to be true. Flexibility increases the potential for transforming what would be “negative” results into “positive” results, i.e., bias, u. For several research designs, e.g., randomized controlled trials [18–20] or meta-analyses [21,22], there have been efforts to standardize their conduct and reporting. Adherence to common standards is likely to increase the proportion of true findings. The same applies to outcomes. True findings may be more common when outcomes are unequivocal and universally agreed (e.g., death) rather than when multifarious outcomes are devised (e.g., scales for schizophrenia outcomes) [23]. Similarly, fields that use commonly agreed, stereotyped analytical methods (e.g., Kaplan-Meier plots and the log-rank test) [24] may yield a larger proportion of true findings than fields where analytical methods are still under experimentation (e.g., artificial intelligence methods) and only “best” results are reported. Regardless, even in the most stringent research designs, bias seems to be a major problem. For example, there is strong evidence that selective outcome reporting, with manipulation of the outcomes and analyses reported, is a common problem even for randomized trials [25]. Simply abolishing selective publication would not make this problem go away.

Corollary 5: The greater the financial and other interests and prejudices in a scientific field, the less likely the research findings are to be true. Conflicts of interest and prejudice may increase bias, u. Conflicts of interest are very common in biomedical research [26], and typically they are inadequately and sparsely reported [26,27]. Prejudice may not necessarily have financial roots. Scientists in a given field may be prejudiced purely because of their belief in a scientific theory or commitment to their own findings. Many otherwise seemingly independent, university-based studies may be conducted for no other reason than to give physicians and researchers qualifications for promotion or tenure. Such nonfinancial conflicts may also lead to distorted reported results and interpretations. Prestigious investigators may suppress via the peer review process the appearance and dissemination of findings that refute their findings, thus condemning their field to perpetuate false dogma. Empirical evidence on expert opinion shows that it is extremely unreliable [28].

Corollary 6: The hotter a scientific field (with more scientific teams involved), the less likely the research findings are to be true. This seemingly paradoxical corollary follows because, as stated above, the PPV of isolated findings decreases when many teams of investigators are involved in the same field. This may explain why we occasionally see major excitement followed rapidly by severe disappointments in fields that draw wide attention. With many teams working on the same field and with massive experimental data being produced, timing is of the essence in beating competition. Thus, each team may prioritize on pursuing and disseminating its most impressive “positive” results. “Negative” results may become attractive for dissemination only if some other team has found a “positive” association on the same question. In that case, it may be attractive to refute a claim made in some prestigious journal. The term Proteus phenomenon has been coined to describe this phenomenon of rapidly alternating extreme research claims and extremely opposite refutations [29]. Empirical evidence suggests that this sequence of extreme opposites is very common in molecular genetics [29].

These corollaries consider each factor separately, but these factors often influence each other. For example, investigators working in fields where true effect sizes are perceived to be small may be more likely to perform large studies than investigators working in fields where true effect sizes are perceived to be large. Or prejudice may prevail in a hot scientific field, further undermining the predictive value of its research findings. Highly prejudiced stakeholders may even create a barrier that aborts efforts at obtaining and disseminating opposing results. Conversely, the fact that a field is hot or has strong invested interests may sometimes promote larger studies and improved standards of research, enhancing the predictive value of its research findings. Or massive discovery-oriented testing may result in such a large yield of significant relationships that investigators have enough to report and search further and thus refrain from data dredging and manipulation.
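The corollaries refer to formulas (“all functions above”) that are not reproduced in these notes. The sketch below is a reconstruction from the cited Ioannidis paper as best I recall it, so check it against the original before relying on it. It computes the post-study probability that a finding is true (PPV) from the pre-study odds R, the power 1 − β, the significance threshold α, and the bias u, plus the no-bias version for several teams testing the same question. The function names and the example scenarios are illustrative assumptions, not part of the paper.

```python
def ppv(pre_study_odds, power, alpha=0.05, bias=0.0):
    """Post-study probability that a claimed finding is true.

    pre_study_odds: R, ratio of true to false relationships among those tested
    power:          1 - beta
    alpha:          type I error rate
    bias:           u, share of would-be "negative" results reported as "positive"
    """
    beta = 1.0 - power
    r, u = pre_study_odds, bias
    true_positives = (1.0 - beta) * r + u * beta * r
    all_positives = r + alpha - beta * r + u - u * alpha + u * beta * r
    return true_positives / all_positives


def ppv_many_teams(pre_study_odds, power, n_teams, alpha=0.05):
    """PPV when n_teams independent, equally powered teams probe the same question (no bias)."""
    beta = 1.0 - power
    r = pre_study_odds
    claimed_true = r * (1.0 - beta ** n_teams)
    claimed_total = r + 1.0 - (1.0 - alpha) ** n_teams - r * beta ** n_teams
    return claimed_true / claimed_total


if __name__ == "__main__":
    # Hypothetical scenarios chosen only to show the direction of each corollary.
    print("large vs small study :", ppv(0.1, 0.80), ppv(0.1, 0.20))            # Corollary 1
    print("confirmatory vs fishing:", ppv(1.0, 0.80), ppv(0.001, 0.80))        # Corollary 3
    print("low vs high bias     :", ppv(1.0, 0.80, bias=0.10), ppv(1.0, 0.80, bias=0.50))  # 4 and 5
    print("1 team vs 10 teams   :", ppv_many_teams(0.1, 0.50, 1), ppv_many_teams(0.1, 0.50, 10))  # 6
```

In each pair the second value is lower than the first: less power, lower pre-study odds, more bias, or more competing teams all reduce the chance that a reported positive finding is actually true.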

How to Find Studies

Wikipedia is usually a good starting place. Follow any cited studies from that page. A more advanced search would be to go to PLOS Medicine. You can also go into Google Scholar and type in whatever you’re looking for.

Articles on Problems in Science:

Problems with meta-analyses.

Homework

Go back to your previous HW. Using the criteria we have discussed, evaluate the quality of the evidence in favor of or against the health claims of the supplement/treatment. In other words, look at some of the actual studies (the abstracts) that are cited in support of a treatment and look at some of the studies (the abstracts) that question the efficacy or safety of the treatment. Which side has the better quality of evidence? Be sure to consider the kind of clinical study.